The ultimatum arrived without ceremony. The United States Department of Defense – rebranded as the “Department of War” by the current administration – issued Anthropic a stark choice: grant unchecked military access to its Claude AI models, including for mass domestic surveillance and fully autonomous lethal weapons with no human oversight, or risk being designated a “supply chain risk” and losing hundreds of billions in government contracts.

Anthropic said no.

In a tense week that sent shockwaves through Silicon Valley and beyond, Anthropic CEO Dario Amodei published a rare public statement: “These threats do not change our position: we cannot in good conscience accede to their request.” It’s a line that may go down in history – either as the principled stand that set the limit on AI militarization, or as the beginning of the end for the safety-first AI lab.

Welcome to the most consequential AI ethics battle of 2026.

What the Pentagon Actually Demanded

The specifics of the Department of Defense’s demands are more alarming than the headlines suggest. According to reporting by The Verge and confirmed by Amodei’s own statement, the Pentagon sought to remove two specific restrictions from Anthropic’s government contracts:

- Mass domestic surveillance. The ability to use Claude to automatically aggregate public data – movement records, web browsing histories, social associations – into comprehensive profiles of American citizens, without warrants, at scale.

- Fully autonomous lethal weapons. Giving AI systems the authority to select and engage human targets with no human in the loop.

Amodei did not frame this as anti-military. In fact, Anthropic has been remarkably hawkish by Silicon Valley standards: it was the first frontier AI company to deploy models on US government classified networks, the first to deploy at the National Laboratories, and Claude is already embedded across the DoD for intelligence analysis, operational planning, and cyber operations.

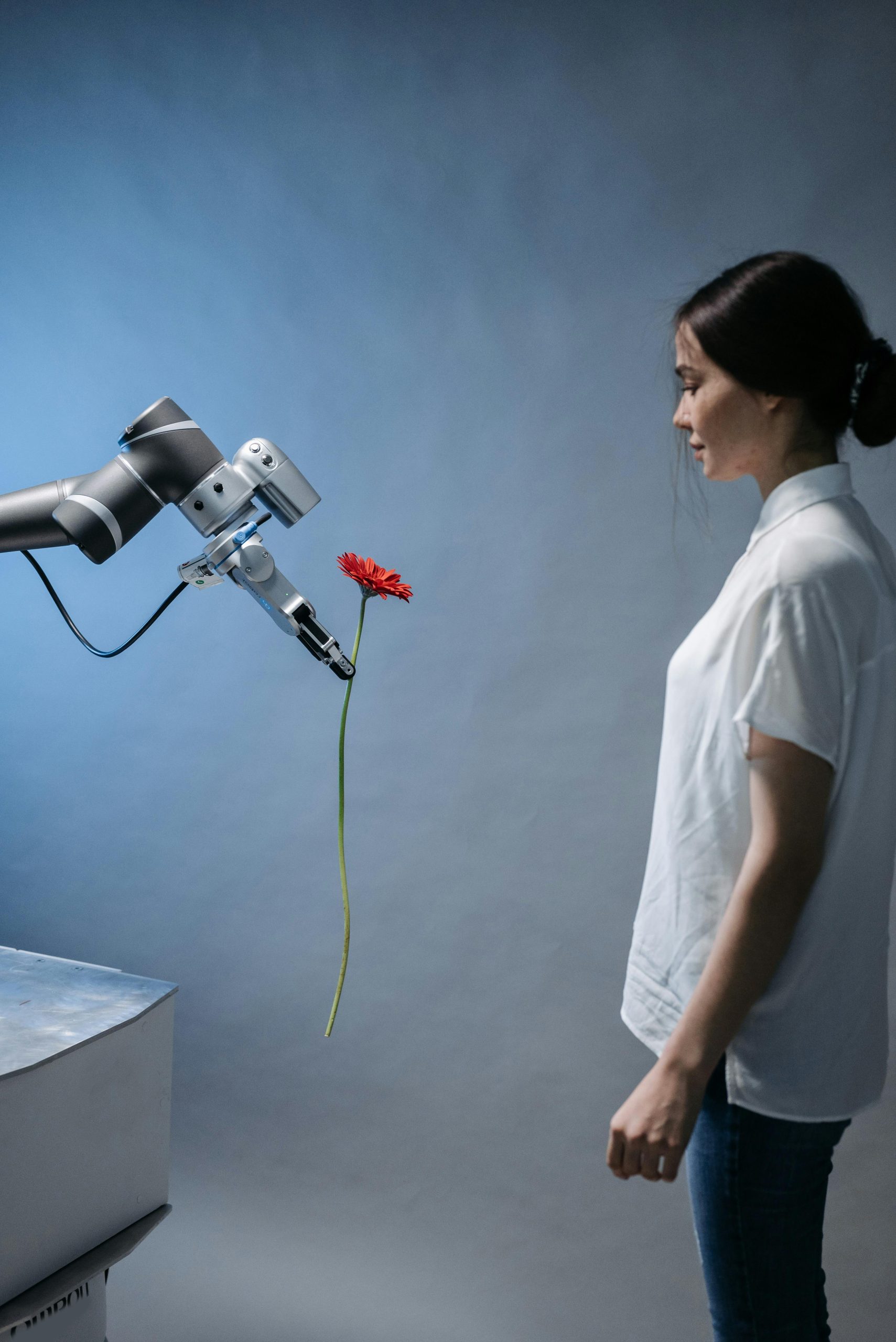

The line Anthropic drew is narrow but significant. “Partially autonomous weapons, like those used today in Ukraine, are vital to the defense of democracy,” Amodei wrote. “But today, frontier AI systems simply are not reliable enough” for fully autonomous kill decisions. He offered to partner with the DoD on R&D to improve reliability – an offer that was reportedly declined.

How Autonomous AI Warfare Already Looks in 2026

While this debate plays out in Washington, the hardware-and-software machinery of AI warfare is already being assembled. Palantir's Maven Smart System (MSS) – acquired by NATO in 2025 – is an AI-powered decision support system that functions, in practice, as a targeting platform. At AIPCon earlier this month, Cameron Stanley, the Department of War's Chief Digital and Artificial Intelligence Officer, demonstrated how any person or vehicle could be flagged for a military strike with three mouse clicks: "Left click, right click, left click."

MSS uses satellite imagery analysis, AI detection models, and natural language queries to surface targets. A user can type “Show me detections of [Russian airframe type]” and the system queries tens of thousands of detection objects across all available satellite imagery in seconds. NATO has integrated the system with AI solutions from defense contractors in Germany, France, and the UK.

The distinction between “decision support” (human in the loop) and “fully autonomous” (human out of the loop) is paper-thin when the system is optimized to reduce the human decision to three clicks – and the Pentagon now wants AI labs to remove even that constraint.

OpenAI and xAI Already Said Yes – What That Means for Anthropic

Here's where the competitive dynamics get brutal. According to reporting from the Washington Post and confirmed by industry sources, both OpenAI and xAI have already agreed to the Pentagon's terms. OpenAI removed a ban on "military and warfare" use cases from its usage policies in 2024, then signed a deal with autonomous weapons maker Anduril, and has since reached agreement with the DoD on the terms Anthropic refused.

This creates an impossible market dynamic. If the US government designates Anthropic a supply chain risk, the company could be locked out of the massive and growing federal AI market. The Pentagon alone is expected to spend over $1 trillion on AI capabilities through 2035. For an AI company that relies on large-scale deployment to fund the frontier model research it believes is essential for safe AI development, losing government access isn’t just a revenue problem – it’s potentially existential.

Meanwhile, Meta spent $14.3 billion on a 49% stake in Scale AI and hired its then-CEO, Alexandr Wang, to build what Zuckerberg has called "superintelligence." Scale already holds a Department of Defense contract for an AI agent program for US military planning. The message is clear: the AI-military-industrial complex is consolidating, and companies unwilling to remove safety guardrails face exclusion.

The Tech Worker Revolt – and Why It Might Not Matter

Organized groups representing 700,000 tech workers at Amazon, Google, Microsoft, and other companies have signed a joint statement demanding their employers reject the Pentagon’s demands. On the ground, the sentiment among employees is stark.

“When I joined the tech industry, I thought tech was about making people’s lives easier,” an Amazon Web Services employee told The Verge. “But now it seems like it’s all about making it easier to surveil and deport and kill people.”

In 2018, similar grassroots pressure worked: protests by thousands of Google employees pushed the company to drop its original "Project Maven" Pentagon contract. But 2026 is not 2018. Tech worker organizing has largely been suppressed, the political climate has shifted, and the financial stakes are orders of magnitude larger. Most employees interviewed by The Verge saw little realistic chance that their employers would push back against government demands.

Anthropic’s Dario Amodei is, by his own admission, not opposed to lethal autonomous weapons in principle – just not with today’s technology, which he calls insufficiently reliable. It’s a carefully calibrated position, and whether it holds under financial and political pressure remains to be seen.

Anthropic’s Responsible Scaling Policy v3: Progress or Retreat?

Adding another layer of complexity, Anthropic this week also released version 3.0 of its Responsible Scaling Policy – the voluntary framework the company uses to mitigate catastrophic AI risks.

The RSP, first introduced in 2023, uses a tiered "AI Safety Level" (ASL) system: as Claude becomes more capable of helping create weapons of mass destruction or enabling large-scale cyberattacks, progressively stricter safeguards become mandatory. Version 3 refines these levels and adds transparency measures.

Critics, however, note that Anthropic simultaneously dropped its longtime safety pledge from the RSP language, a move The Verge described as accommodating competitive pressures in the AI race. The company says the update “reinforces what has worked well” and improves accountability – but the timing, in the middle of Pentagon negotiations, raises uncomfortable questions about what was given and what was taken.

The RSP covers things like whether Claude could provide “serious uplift” to someone trying to create a bioweapon, or enable a cyberattack on critical infrastructure. It does not appear to directly govern the autonomous weapons question – which is handled contractually, not through the scaling policy.

What’s Really at Stake: The Precedent, Not the Contract

Strip away the politics and this is a foundational question for AI in 2026: Who gets to decide when an AI system is safe enough to make kill decisions?

If the answer is “the customer” – in this case, the Department of War – then the AI safety guardrails that companies like Anthropic have spent years building become optional features, not fundamental constraints. Safety becomes a product differentiation strategy, not a moral floor. And in a competitive market where OpenAI and xAI have already agreed to remove those guardrails, the market pressure on any holdout is enormous.

If the answer is “the AI company,” then private companies are making decisions that should arguably be made democratically, with oversight, by elected representatives and international bodies. Dario Amodei is not a general. He was not elected. The legitimacy question cuts both ways.

And if the answer is “no one has decided yet, and that’s the problem,” then this standoff is what the reckoning looks like – messy, commercial, and deeply uncomfortable for everyone involved.

What Happens Next

The immediate stakes are clear: Anthropic is facing a potential designation as a “supply chain risk” that could wall it off from federal contracts. The company will either hold its current position, negotiate a middle ground, or – under sufficient financial pressure – quietly update its contract terms.

Longer term, the battle lines being drawn this week will shape the entire trajectory of AI governance. The 700,000-worker joint statement is an unusual show of force. Congressional scrutiny is building. And the international community – including NATO allies who are deploying Palantir’s Maven Smart System – is watching how American AI companies navigate the line between national security and autonomous killing.

Meanwhile, Palantir CEO Alex Karp has openly celebrated building "the most advanced war machine the world has ever seen." OpenAI's Sam Altman has signed defense contracts and removed usage restrictions. Elon Musk's xAI is reportedly on board. Mark Zuckerberg has spent $14 billion to get into the game.

Anthropic is, for now, the last major American AI lab still saying “not yet.”

The Bottom Line

The Anthropic-Pentagon standoff is not just a contract dispute. It is a stress test of whether AI safety commitments survive contact with state power and financial pressure – in real time, in 2026, with the technology that actually exists today.

The outcome will set a precedent that reverberates through every AI lab boardroom, every DoD procurement office, and every country now developing its own AI military capabilities. Because if the United States normalizes fully autonomous lethal AI today, every other nation will follow.

Dario Amodei's statement – published at a URL his company deliberately and pointedly titled "statement-department-of-war" – may be the most important document in AI policy so far this year.

Whether it’s also the last word is a different question entirely.

What do you think – should AI companies have the right to impose safety limits on military contracts, or does national security override private ethics decisions? Leave a comment below, or subscribe to UnbreakableCloud for daily AI analysis you can actually use.